How Health Plans Are Scoring AI-Enabled Vendors in 2026

Most vendors are positioning around their technology. Plans are scoring six other things.

Upward Growth provides health tech leaders with the playbooks and proof to transform complex markets into real growth. Each week, we deliver clear, practical strategies on positioning, messaging, and growth, so leaders can close enterprise deals and build repeatable momentum.

🟦 Connect with the author, Ryan Peterson, on LinkedIn.

Two years into the AI gold rush in health tech, health plan evaluation committees have adapted. They've rebuilt their scoring criteria, tightened their compliance requirements, and developed a sharper filter for separating real capability from marketing language. With AI-enabled startups capturing 62% of all digital health VC dollars in the first half of 2025 and most of that money directed at payor buyers, plans have seen every version of "we use AI to automate X" and "we built proprietary models." They've stopped being impressed by the technology pitch.

Selling an AI product to a health plan often involves an 8-to-15-month enterprise sales cycle that spans clinical evaluation, compliance review, financial justification, IT assessment, and procurement. Your AI is what gets you into the clinical conversation, but the plan’s purchasing decision depends on the other four stages, where technology barely comes up and where most vendors have done the least work. The technology gets you in the room. Compliance, financial justification, and evidence are what keep you there.

This article is the positioning and sales strategy work that fills those gaps. If you’re preparing to bring an AI product to MA plans, Medicaid MCOs, or commercial health plans, what follows is how to build the financial case, the compliance readiness, and the evidence package that health plan buyers actually need to move a deal forward.

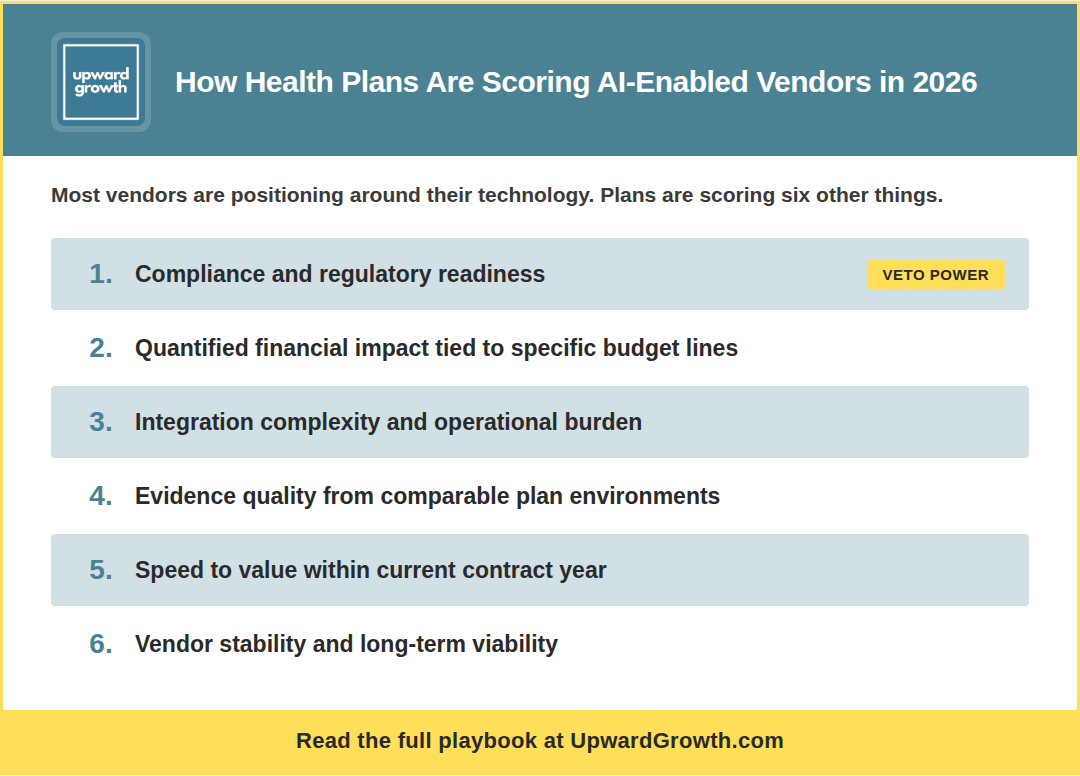

What’s Actually on the Plan’s AI Vendor Scorecard

Based on conversations with health plan executives and the vendors I advise, most AI evaluations at plans die in one of three places: compliance review, financial justification, or operational feasibility. The vendor almost never finds out which one killed them because the plan just stops responding.

Here’s how plan evaluation committees are weighing AI vendor decisions right now, roughly rank-ordered by influence on whether a deal moves forward:

Compliance and regulatory readiness (heaviest weight). If your AI product can’t clear the plan’s compliance review, nothing else in your pitch matters. Plans are evaluating your model governance, audit trails, data handling, and human oversight protocols against their internal standards and, increasingly, CMS’s proposed requirements for AI in MA. A vendor can have the support of every other stakeholder and still die here.

Quantified financial impact tied to specific budget lines. Not “ROI” in the abstract. Plans want to see which budget category your product affects (medical expense, administrative cost, quality incentive spend), by how much in PMPM terms, and within what time horizon relative to their fiscal calendar. If your financial case can’t survive CFO scrutiny, clinical evidence alone won’t save it.

Integration complexity and operational burden on the plan. Plans are weighing how much they’d have to change internally to use your product, and they’re doing it from the first meeting. If your AI requires a six-month integration project, three FTEs on the plan side, and a rip-and-replace of their existing care management workflow, you’ve priced yourself out of the conversation before you’ve even discussed price.

Evidence quality and comparability. Plans discount evidence that doesn’t come from an environment that resembles theirs. Pilot data from a comparable plan (similar size, line of business, member population) carries the most weight. Generic accuracy metrics from dissimilar settings carry the least. The specificity of your evidence matters more than the volume of it.

Speed to value. Products that deliver measurable impact within the current contract year score higher than products whose ROI depends on next year’s Stars bonus or risk adjustment settlement. In MA especially, bid assumptions and CMS benchmark changes can shift the math between years, so plans heavily discount future-year payback.

Vendor stability and long-term viability. Plans are asking about funding, acquisition risk, and operational maturity more than they used to, because they’ve been burned by vendors who didn’t survive long enough to deliver on their promises.

Notice what’s not on this list: your model architecture, your training data size, your accuracy benchmarks in isolation, or how “agentic” your system is. Those may matter to your engineering team, but they barely register on the plan’s evaluation scorecard.
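To pressure-test your own readiness against the list above, here is a minimal sketch of a self-scoring tool. The weights are hypothetical placeholders that only preserve the rough rank order described above (no plan has shared numeric weights with me), and compliance is modeled as a pass/fail gate because a failed review ends the evaluation regardless of everything else.

```python
# Hypothetical weights: the scorecard above is only rank-ordered, so these
# numbers are illustrative assumptions, not anything a plan has published.
WEIGHTS = {
    "financial_impact": 0.30,
    "integration_burden": 0.25,
    "evidence_quality": 0.20,
    "speed_to_value": 0.15,
    "vendor_stability": 0.10,
}


def score_vendor(passes_compliance: bool, ratings: dict[str, float]) -> float:
    """Return a 0-5 weighted score, or 0.0 if the compliance gate fails.

    ratings maps every criterion in WEIGHTS to a 0-5 self-assessment.
    """
    if not passes_compliance:
        # Compliance is a gate, not a weight: nothing else counts if it fails.
        return 0.0
    missing = set(WEIGHTS) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings: {sorted(missing)}")
    return sum(WEIGHTS[name] * ratings[name] for name in WEIGHTS)
```

Rate yourself honestly on each criterion, then look at which low rating is dragging the total down the most. That is usually where the next quarter of positioning work should go.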

The rest of this article gives you the playbook for the three criteria you can most directly control: financial impact, compliance readiness, and evidence quality.

How to Build the Financial Case That Survives the CFO’s Review

Quantified financial impact is where vendors leave the most value on the table. Every vendor evaluation at a plan ultimately comes down to a specific set of financial and quality metrics: PMPM cost, medical loss ratio, administrative cost ratio, Stars and HEDIS measures, risk adjustment accuracy, and member retention. If your AI product doesn’t connect to at least one of those in specific, quantified terms, you’re asking the health plan to make assumptions that they probably won’t.

Here’s a five-step process for building that translation.

Step 1: Identify which of the plan’s core financial levers your product touches. There are really only five: medical expense reduction, administrative cost reduction, quality incentive revenue protection (Stars bonuses), risk adjustment revenue protection, and member retention (which affects all of the above). Pick the one or two that your product most directly affects. If you say “all of them,” you haven’t been specific enough.

Step 2: Map your clinical or operational outcome to the specific budget line. “We improve medication adherence” maps to medical expense reduction (fewer avoidable hospitalizations) and quality incentive revenue (Stars medication adherence measures). “We identify undocumented HCCs” maps to risk adjustment revenue protection. Plans need to see the causal chain, not just the endpoint.

Step 3: Quantify using the plan’s own benchmarks, and know where to find them. CMS publishes MA benchmark rates, risk adjustment factors, and Stars bonus thresholds annually. State Medicaid agencies publish rate-setting data. Plans file public financial statements showing their medical loss ratio (MLR) and administrative cost ratios. In a market where dozens of AI vendors are targeting payor operations simultaneously, building your financial case with numbers the plan already recognizes is one of the fastest ways to separate yourself from the pack.

Step 4: Build the ROI at three attainment levels. CFOs don’t trust base-case projections. Show what happens at 100%, 70%, and 50% of your projected outcome. If your ROI still works at 70% attainment, say so. If it doesn’t work below 85%, be honest about that too, because the CFO will run the math themselves, and you’d rather control the narrative.

Step 5: Tie the payback to the plan’s fiscal calendar. Does the plan see impact in the current contract year (CY), or does ROI depend on next year’s Stars bonus? For MA, this means understanding when Stars measurement periods close, when risk adjustment submissions are due, and when bid assumptions lock. For Medicaid, it means aligning with the state’s rate-setting cycle. Plans heavily discount future-year payback, so a product that delivers ROI in the current contract year is a fundamentally stronger financial proposition.
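Step 5 is easier to reason about with the arithmetic written out. The sketch below estimates how much of a projected PMPM saving lands inside the current contract year for a given go-live month. It assumes a calendar-year contract (as in MA) and savings that start in full in the go-live month with no ramp; the function name and the inputs in the usage note are hypothetical.

```python
def in_year_savings(members: int, pmpm_savings: float,
                    go_live_month: int, attainment: float = 1.0) -> float:
    """Savings realized from go-live through December of the contract year.

    go_live_month is 1-12. Assumes no ramp-up period, which is optimistic;
    lower the attainment argument to model a slower start.
    """
    if not 1 <= go_live_month <= 12:
        raise ValueError("go_live_month must be between 1 and 12")
    months_live = 12 - go_live_month + 1  # the go-live month itself counts
    return members * pmpm_savings * months_live * attainment
```

For a hypothetical 100,000-member plan and $1.00 PMPM in savings, an April go-live leaves nine months of impact, or $900,000 in the current contract year, versus $1.2M for a January go-live. For Medicaid, shift the month numbering to match the state's rate year rather than the calendar year.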

As an example, consider a vendor selling an AI-powered predictive model to identify high-risk members:

The technology pitch is "Our model predicts which members will have an avoidable hospitalization within 90 days with 87% accuracy."

The plan economics version of that same product: "We reduce avoidable inpatient admissions for a 300K-member MA plan by flagging high-risk members early enough for care management intervention, saving an average of $1.80 PMPM in medical expense. That's roughly $6.5M in annual savings against an annual cost of $1.2M. At 70% attainment, that's still $4.5M in savings within the current contract year."
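The CFO will rerun these numbers, so it helps to show your work. Here is a short sketch that reproduces the example above at the three attainment levels from Step 4. The inputs come straight from the example; the `scenario` helper and the breakeven calculation are my own illustration, and the model assumes a full twelve months of impact.

```python
MEMBERS = 300_000        # MA plan size from the example
PMPM_SAVINGS = 1.80      # projected medical expense savings, dollars PMPM
ANNUAL_COST = 1_200_000  # annual cost of the product, dollars


def scenario(attainment: float) -> dict[str, float]:
    """Gross savings, net savings, and savings-to-cost multiple at a given attainment."""
    gross = MEMBERS * PMPM_SAVINGS * 12 * attainment
    return {
        "gross": gross,
        "net": gross - ANNUAL_COST,
        "multiple": gross / ANNUAL_COST,
    }


for level in (1.0, 0.7, 0.5):
    s = scenario(level)
    print(f"{level:.0%} attainment: gross ${s['gross']:,.0f}, "
          f"net ${s['net']:,.0f}, {s['multiple']:.1f}x cost")

# Share of the projected outcome needed just to cover the annual cost.
breakeven_attainment = ANNUAL_COST / (MEMBERS * PMPM_SAVINGS * 12)
print(f"Breakeven attainment: {breakeven_attainment:.1%}")
```

Gross savings come out to $6.48M at full attainment and about $4.54M at 70%, which is where the "roughly $6.5M" and "$4.5M" figures come from. The breakeven line is worth stating out loud in the meeting: under these assumptions the product covers its cost at under 20% of the projected outcome.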

Financial justification gets you through the CFO’s review, but the criterion that carries the heaviest weight and kills more AI deals than any other is compliance. Let’s review that next.

Why the Vendors Winning AI Deals Are Leading With Compliance

The vendors I’m working with who are closing AI deals at plans right now are leading with compliance readiness from the first meeting, while most of their competitors are still treating it as the last boxes to check before a deal can close.

The regulatory environment is forcing this shift. CMS has proposed new guardrails for AI use in Medicare Advantage, including a broad definition of “automated systems” that could cover almost any AI-assisted process a vendor might sell. The proposed rule for Contract Year (CY) 2026 would require MA organizations to ensure equitable access regardless of whether services are delivered through human or automated systems, and CMS is claiming audit authority to enforce it. Plans are asking compliance questions they weren’t asking 12 months ago, and almost no vendor is prepared to answer them. That’s your opening.

Start by bringing your compliance lead into the conversation early, ideally by the first or second meeting. When the plan’s evaluation team sees a named person who can answer model governance, data handling, and audit trail questions directly, it surfaces the plan’s compliance requirements while you still have time to build toward them instead of scrambling to address them in month four. It also signals operational maturity that most competitors can’t match, because most send their sales rep to handle compliance questions by referencing an FAQ.

Before the plan even sends its security questionnaire, pre-populate it. Most plans use a standardized security questionnaire or their own proprietary vendor assessment, and if you’ve sold to plans before, you know what 80% of these questions are. Fill it out proactively and attach it to your second meeting follow-up with a note: “We know this is coming. Here’s a head start.” That move alone can cut weeks off your procurement timeline.

Then get your compliance lead on a call with the plan’s compliance lead in the first two weeks, before the contract is drafted and before procurement is involved.

Surface the hard questions when you still have time to address them:

- How do you handle model drift?

- How do you ensure equitable outcomes across member populations?

- What's your human-in-the-loop protocol for decisions that affect member care or benefits?

These compliance reviews routinely add 4-8 weeks to procurement timelines, which is exactly why the vendors who front-load this work close faster. That kind of delay is becoming standard for AI products, and it’s even more pronounced in Medicaid, where MCOs face a patchwork of state-level AI requirements on top of federal ones.

But even with strong financials and compliance readiness, the plan still needs to believe your AI will work in their environment. That's a harder question to answer than most vendors expect, because the evidence you've built with other clients may not translate directly to the plan sitting across the table.

The Evidence Problem Every AI-Enabled Vendor Faces With Payors

Health plans are skeptical buyers, and not because of AI specifically. They’ve been burned by health tech promises for over a decade, from care management tools that required three FTEs to maintain to analytics products that generated buy-up dashboards nobody used. The credibility gap in health tech sales is deep, and every plan buyer remembers the vendor solutions they championed that didn’t work.

Frankly, AI makes this worse. Health plans have seen enough demos (AI or otherwise) to know that performance in a controlled environment doesn’t always hold up against their messy, incomplete claims and eligibility files. And with “AI-powered” now the most overused phrase in health tech, applied to everything from genuine machine learning systems to basic rules engines with a chatbot bolted on, plans have learned to discount the label entirely.

The evidence that actually moves plan evaluations forward follows a clear hierarchy:

A live, referenceable client at a comparable plan carries the most weight, and by referenceable, I mean a real customer running your product in production who’s willing to answer hard questions about implementation, data quality, and results. Comparable matters here: a 50K-member Medicaid MCO in the Southeast doesn’t validate your product for a 500K-member MA plan in California, and your three MA clients may not help when you’re pitching a Medicaid MCO for the first time.

Beyond a referenceable client, plans give credit to independent evaluations and published case reviews, but the baseline expectation is that you can show internal data with methodology transparent enough for the plan’s analytics team to evaluate. Peer-reviewed research is a nice-to-have, but plans won’t wait years for it.

The real challenge is that your existing evidence may not match the specific plan you’re pursuing right now. Here’s how to close that gap.

Build a retrospective analysis using the plan’s own publicly available data. CMS publishes plan-level Stars data, risk adjustment scores, and quality measure performance. Pull the prospect’s specific numbers and run your model against their publicly reported baseline to build credibility that’s specific to their environment.

Structure your engagement around pre-agreed success metrics. “If we hit X on measure Y within 60 days using a subset of your member population, we move to a full contract. If we don’t, you’ve lost nothing.” Pre-agreeing on the metrics eliminates the “we’ll evaluate the results and get back to you” death spiral and gives the plan’s champion a concrete framework to take back to their leadership.
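One way to keep a pre-agreed metric from drifting once the pilot starts is to write it down as an explicit rule both sides sign off on. The sketch below is a hypothetical illustration: the class name, the measure, and the threshold are placeholders, it assumes a higher-is-better measure, and the 60-day default simply mirrors the wording above.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class SuccessCriterion:
    measure: str           # hypothetical measure name agreed with the plan
    target: float          # the "X" both sides signed off on (higher is better)
    start: date            # first day of the pilot
    window_days: int = 60  # evaluation window from the example wording

    def decision(self, observed: float, measured_on: date) -> str:
        """Return 'go', 'pending', or 'no-go' for one reading of the measure."""
        elapsed = (measured_on - self.start).days
        if elapsed <= self.window_days and observed >= self.target:
            return "go"       # target hit inside the window: move to contract
        if elapsed < self.window_days:
            return "pending"  # window still open, keep measuring
        return "no-go"        # window closed without hitting the target
```

For example, `SuccessCriterion("outreach completion rate", 0.80, date(2026, 1, 1))` returns "go" for a reading of 0.82 on February 15 and "no-go" for a reading of 0.70 on March 2. The point is less the code than the discipline: the rule is fixed before any results exist, so nobody can reinterpret it afterward.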

And bring a clinical advisor or plan-side reference who can speak to how your approach performs with the kind of member population, line of business, or operational environment this plan manages. The evaluation committee will weigh that voice differently than anything your sales team presents.

Those three elements (plan-specific evidence, a structured engagement with defined success criteria, and a clinical advisor or plan-side reference who can speak to a comparable environment) are what plans need to move forward. The more specific each one is to the plan you’re pursuing, the faster the deal moves.

That’s the strategic framework: how plans score you, how to translate your AI into their financial language, how to lead with compliance, and how to build the right evidence package for the plan in front of you. Below is the tactical layer: a credibility checklist for your plan-facing materials, a three-question script that surfaces your buyer’s real concerns, and a positioning template that translates any AI capability into plan-ready language.
